Data Privacy Brasil participates in UN’s OHCHR briefing on Brazil

The organization highlighted how the advance of edtech has been violating children’s privacy in the country

In May, Data Privacy Brasil Research Association submitted a joint contribution with Privacy International and InternetLab to the United Nations’ Human Rights Office (OHCHR), ahead of the review of Brazil’s third periodic report on the implementation of the International Covenant on Civil and Political Rights at the 138th session of the UN Human Rights Committee.

The submission calls attention to violations of children’s privacy and data protection rights in the use of educational technologies. It discusses the current state of technology use in Brazilian public basic education; the use of AI-based applications and the associated risks; and the use of facial recognition technologies in schools and the problems they raise. The submission draws on Data Privacy Brasil’s research project “AI in the classroom: models of participation for the school community”.

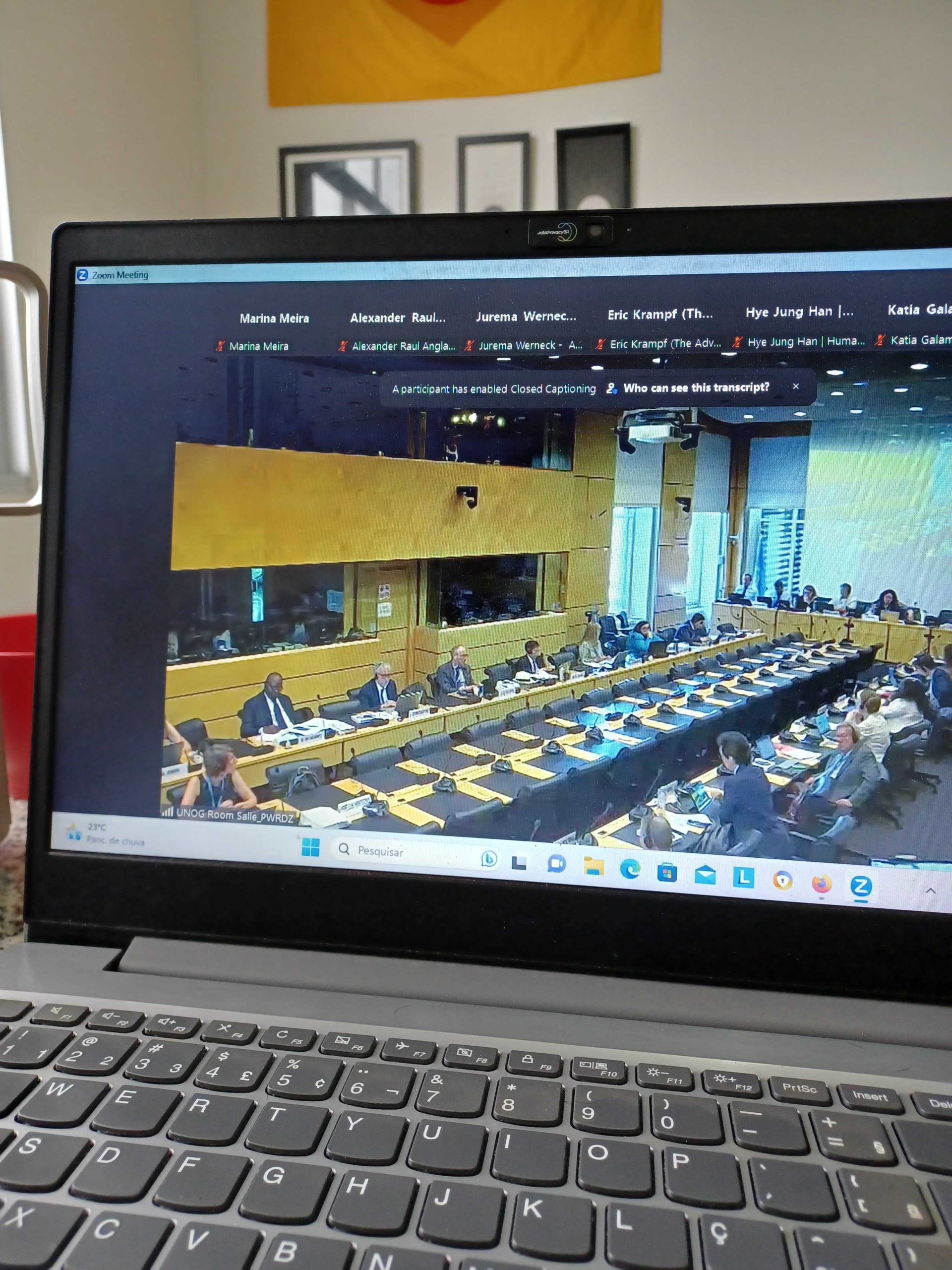

On June 26, Marina Meira, Head of Projects at Data Privacy Brasil, took part in the OHCHR’s formal and informal briefings on Brazil to present to the members of the UN’s Office the human rights violations that Brazilian children have faced in the use of edtech. Data Privacy Brasil’s oral contribution to the debate was the following:

My statement concerns the submission presented by Data Privacy Brasil Research Association jointly with Privacy International and InternetLab. I will highlight two ongoing processes in Brazil that have been causing severe violations of children’s privacy in the educational context, thus violating Article 17 of the ICCPR.

The first process is the spread of artificial intelligence tools in schools all over the country without any prior risk assessments, safeguards or consideration of the fact that children are going through a developmental stage. AI edtech often relies on the mining of a great amount of personal data and is also often associated with biases that can cause discrimination and exacerbate inequalities.

The second process is how the procurement of educational technologies has been conducted. The State, at different federative levels and especially since the start of the pandemic, has been acquiring edtech based on what is most cost-effective, without assessing whether the resources protect students’ and teachers’ privacy and human rights. And private companies have been benefiting from an undue interpretation of Brazil’s Procurement Law, as if they didn’t profit from the intense harvesting of student data.

Given this worrisome scenario, we recommend the UN Human Rights Committee call on Brazil to:

(i) Regulate edtech, establishing strong safeguards to protect children’s privacy and best interests;

(ii) Regulate AI and consider for such regulation that operations that involve children’s data must be given special attention, so that edtech that uses AI is regulated to reduce harms and make systems more transparent and auditable;

(iii) And adhere to formal public procurement processes when awarding a contract to an edtech company, while also providing training to educators and public administrators in data protection to enable them to evaluate the risks and benefits of edtech beyond usability.

The full submission can be accessed here.

See also

-

Data Privacy Brasil launches the AI Harm Library

Our repository systematizes evidence of harm caused by AI systems and reveals that nearly 29.6% of the documented harms directly affect psychological and social well-being. Learn more about the new initiative!

-

Data Privacy Brasil participates in international seminar on Brazil’s Digital Statute for Children and Adolescents

Yesterday, Data Privacy Brasil took part in the seminar “Online Safety for Children Statute: Lessons from the Brazilian Experience”, organized by Digital Action and Conectas Human Rights. Check out our report featuring the key highlights from the event.

-

Brazilian Senate approves Landmark Digital Child Protection Bill: what are the next steps?

On 27 August 2025, Brazil's Senate approved Bill PL 2628/2022, focused on protecting the rights of children on digital platforms. What happens now?

-

Artificial Intelligence in Focus: A Comparative Analysis of the BRICS and FOC Declarations on AI Governance

The BRICS Leaders' Declaration on Global Governance of Artificial Intelligence has gained prominence in both Brazilian and international media, as it represents a joint position by Global South countries in the ongoing contest over this emerging technology.

-

AI Explainability: How to Avoid Rubber-Stamping Recommendations

Experts debate if human oversight can replace the need for the explainability of AI systems. Bruno Bioni, co-director of Data Privacy Brasil, was one of the experts interviewed. Check it out in the text!

-

Digital Merger Watch: Data Privacy Brasil joins new global initiative

The group includes national and international organizations and will focus on analyzing and challenging Big Tech’s efforts to reinforce its dominance through mergers and acquisitions.

-

Beyond Digital Rights: Towards a Fair Information Ecosystem?

One of the major challenges in the field of digital rights is the tendency toward segmentation and hyper-specialization in topics such as privacy, freedom of expression, net neutrality, data protection, and the regulation of AI systems. Learn more about the topic in the article published in Tech Policy.

-

Global AI governance: Taking action with civil society

More than 40 civil society organizations call for inclusion in AI Action Summit in letter on global governance of Artificial Intelligence.

-

The Artificial Intelligence Legislation in Brazil: Technical Analysis of the Text to Be Voted on in the Federal Senate Plenary

The Internal Temporary Artificial Intelligence Committee (CTIA) of the Federal Senate approved the substitute report for Bill 2338/2023. This bill aims to define the legal framework for regulating the use of Artificial Intelligence systems in Brazil.

-

AI in the 2024 Brazilian elections

Aláfia Lab, *desinformante and Data Privacy Brasil launch the report “AI in the 2024 Brazilian elections”, with an analysis of the use of artificial intelligence in the first round of the elections.

-

A Fragmented Landscape Is No Excuse for Global Companies Serious About Responsible AI

For the third year in a row, MIT Sloan Management Review and Boston Consulting Group (BCG) have brought together an international panel of AI experts to help gain insights into the lack of alignment around global standards and norms for responsible AI. Bruno Bioni, co-director of Data Privacy Brasil, was one of the experts interviewed. Check it out in the text!

-

Research Project Outcomes: A vision for inclusive educational technology

Check out Júlia Mendonça's interview for Tech Ethics Lab, about the use of technology in schools.

-

Key Themes in AI Regulation: The local, regional, and global in the pursuit of regulatory interoperability

Data Privacy Brasil releases its report “Key Themes in AI Regulation: The local, regional, and global in the pursuit of regulatory interoperability,” supported by the Heinrich Böll Foundation. This work is the result of months of research under the project “Where the SabIA Sings: Governance and Regulation of Artificial Intelligence from Brazil”.

DataPrivacyBr Research | Content under licensing CC BY-SA 4.0